What does profile-guided optimization mean?

Some languages have a JIT (Just In Time) compiler available at runtime, which can optimize the executed code according to current execution patterns. This is, in large part, what makes LuaJIT and the JVM so fast. They can start a bit slow, but by accumulating information about the code actually running, they get faster and faster for the current load. PfLua is a great example: the firewall rules are optimized again and again, until the current network traffic is handled as quickly as possible.

When you use other languages, such as C, you usually cannot optimize the application once it is compiled, unless you use an optimization technique known as Profile-Guided Optimization. From Wikipedia:

Profile-guided optimization (PGO, sometimes pronounced as pogo), also known as profile-directed feedback (PDF), is a compiler optimization technique in computer programming that uses profiling to improve program runtime performance.

It relies on profiling the compiled application while it runs with the expected, real-world load (web traffic, calculations, etc.), and feeding this profiling information back to the compiler. On the next build, the compiler knows which parts of the program are rarely used, which branches are taken most often, which values to expect in a range, etc. Instead of guessing how the program will behave when choosing optimizations, the compiler has real information, and can optimize more precisely. There is one drawback: you need two compilations and a profiling run to generate the final executable. But it gets easier once you automate it, as the Firefox build process shows.
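In miniature, the information a profiling run gathers looks like the following Rust sketch: one counter per branch, incremented while the program processes a representative workload. `BranchCounts`, `classify` and the workload are hypothetical, and the counting is done by hand here; with PGO, the compiler inserts equivalent counters itself and writes them to a profile file.

```rust
/// Hypothetical per-branch counters for `classify`: the kind of
/// information a profiling run records. Written by hand here; an
/// instrumented build inserts and dumps such counters automatically.
#[derive(Debug, Default)]
struct BranchCounts {
    negative: u64,
    small: u64,
    large: u64,
}

/// The function being profiled: three branches, each with its counter.
fn classify(x: i64, counts: &mut BranchCounts) -> &'static str {
    if x < 0 {
        counts.negative += 1;
        "negative"
    } else if x < 100 {
        counts.small += 1;
        "small"
    } else {
        counts.large += 1;
        "large"
    }
}

/// Runs `classify` over a sample workload and returns the counters.
fn profile(workload: impl IntoIterator<Item = i64>) -> BranchCounts {
    let mut counts = BranchCounts::default();
    for x in workload {
        classify(x, &mut counts);
    }
    counts
}

/// The branch a compiler using this profile would likely favour
/// (for instance as the fall-through path).
fn hottest_branch(c: &BranchCounts) -> &'static str {
    let branches = [("negative", c.negative), ("small", c.small), ("large", c.large)];
    branches
        .iter()
        .max_by_key(|&&(_, n)| n)
        .map(|&(name, _)| name)
        .unwrap_or("none")
}

fn main() {
    // Made-up "production" workload: mostly large values.
    let counts = profile(-10..1000);
    println!("{:?}", counts);
    println!("hottest branch: {}", hottest_branch(&counts));
}
```

Running it prints `BranchCounts { negative: 10, small: 100, large: 900 }` and `hottest branch: large`: exactly the kind of fact a compiler cannot know from the source alone.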

PGO in LLVM

While PGO has been available in other toolchains for a long time (Visual Studio 2005, the Intel compiler ICC for Itanium), it only appeared recently in LLVM. It has since been applied successfully in Xcode (Objective-C, Swift) and in LDC, the D compiler.

LLVM has a great feature: it uses an intermediate representation (IR), a kind of high-level assembly language, and applies its optimizations and machine code generation to that representation. If you write a compiler for a new language, targeting LLVM IR gives you these features (nearly) for free.

In practice, compiler frontends choose which features they expose, so you may not have access to everything LLVM offers. In particular, the Rust compiler, as of now (April 2016), provides an llvm-args option, but that option filters what you can pass to LLVM, so we cannot use PGO that way.

PGO in Rust

Still, with rustc, you can directly generate the IR, or its binary encoding, called bitcode:

```
rustc --emit llvm-bc main.rs

# or, with cargo:
cargo rustc -- --emit llvm-bc
```

The approach I tried here is to take that bitcode and manually apply LLVM's transformations until I get a compiled executable. This is not really usable for now: I deliberately chose an example with very few dependencies, and with more dependencies, compiling and linking by hand quickly becomes unmanageable.

LLVM comes with a few commands that you can use to build code manually. The first one is opt, which applies optimizations and instrumentation to the bitcode file (here, the file target/release/pgo.bc):

```
opt-3.8 -O2 -pgo-instr-gen -instrprof target/release/pgo.bc -o pgo.bc
```

The new bitcode file contains code to profile the final application (mainly by counting how often each code path is taken). We can now convert that bitcode file to an object file, and link it using clang:

```
llc-3.8 -O2 -filetype=obj pgo.bc
clang-3.8 -O2 -flto -fprofile-instr-generate pgo.o -L/usr/local/lib/rustlib/x86_64-apple-darwin/lib -lstd-ca1c970e -o pgo
```

Note: I built my own rustc from source, so your libstd file may not have the same hash. Rust (as of April 2016) uses LLVM 3.7, but we can still use LLVM 3.8's PGO features, since the bitcode format is apparently backward compatible. I use OS X, and Homebrew's LLVM 3.8 has compilation issues, so I had to build the compiler runtime from source. It's a proof of concept, not production code ;)

We will now run the program we just built, preferably with production data and traffic. It automatically generates a default.profraw file containing the profiling information. This file must then be converted with llvm-profdata into a format that opt understands:

```
llvm-profdata-3.8 merge -output=pgo.profdata default.profraw
```

This .profdata file will now be used in the compilation steps:

```
opt-3.8 -O2 -pgo-instr-use -pgo-test-profile-file=pgo.profdata target/release/pgo.bc -o pgo-opt.bc
llc-3.8 -O2 -filetype=obj pgo-opt.bc
clang-3.8 -O2 -flto -fprofile-instr-use=pgo.profdata pgo-opt.o -L/usr/local/lib/rustlib/x86_64-apple-darwin/lib -lstd-ca1c970e -o pgo-opt
```
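The two passes differ only in a handful of flags, which is what makes the process easy to automate. Here is a hedged Rust sketch of such automation: it rebuilds the exact opt, llc, clang and llvm-profdata command lines used in this post, prints them by default, and runs them in order when given `--run`. The `Stage` type and helper names are hypothetical, the library directory and libstd hash are this post's machine-specific values, and the profiling workload (`10000000`) is an arbitrary assumption. It does nothing about dependencies, which remain the hard part.

```rust
use std::process::Command;

/// The two builds of the PGO cycle (hypothetical helper type).
enum Stage {
    /// Instrumented build; running it writes default.profraw.
    Instrument,
    /// Final build, optimized with the merged profile.
    Optimize { profdata: String },
}

/// Converts string slices into owned command arguments.
fn strings(args: &[&str]) -> Vec<String> {
    args.iter().map(|a| a.to_string()).collect()
}

/// opt / llc / clang command lines for one stage, mirroring the manual commands.
fn pgo_commands(stage: &Stage, input_bc: &str, out: &str, libdir: &str, libstd: &str) -> Vec<Vec<String>> {
    let bc = format!("{}.bc", out);
    // llc derives its output name from the input, replacing .bc with .o.
    let obj = format!("{}.o", out);
    let (opt_flags, clang_flag) = match stage {
        Stage::Instrument => (
            strings(&["-pgo-instr-gen", "-instrprof"]),
            "-fprofile-instr-generate".to_string(),
        ),
        Stage::Optimize { profdata } => (
            vec!["-pgo-instr-use".to_string(), format!("-pgo-test-profile-file={}", profdata)],
            format!("-fprofile-instr-use={}", profdata),
        ),
    };
    let mut opt = strings(&["opt-3.8", "-O2"]);
    opt.extend(opt_flags);
    opt.extend(strings(&[input_bc, "-o", bc.as_str()]));
    let llc = strings(&["llc-3.8", "-O2", "-filetype=obj", bc.as_str()]);
    let lib_dir_flag = format!("-L{}", libdir);
    let lib_flag = format!("-l{}", libstd);
    let clang = strings(&[
        "clang-3.8", "-O2", "-flto", clang_flag.as_str(), obj.as_str(),
        lib_dir_flag.as_str(), lib_flag.as_str(), "-o", out,
    ]);
    vec![opt, llc, clang]
}

/// Whole cycle: instrumented build, profiling run, merge, optimized build.
fn pipeline(input_bc: &str, libdir: &str, libstd: &str, workload: &[&str]) -> Vec<Vec<String>> {
    let mut cmds = pgo_commands(&Stage::Instrument, input_bc, "pgo", libdir, libstd);
    let mut profiling_run = strings(&["./pgo"]);
    profiling_run.extend(strings(workload));
    cmds.push(profiling_run);
    cmds.push(strings(&["llvm-profdata-3.8", "merge", "-output=pgo.profdata", "default.profraw"]));
    let optimize = Stage::Optimize { profdata: "pgo.profdata".to_string() };
    cmds.extend(pgo_commands(&optimize, input_bc, "pgo-opt", libdir, libstd));
    cmds
}

/// Runs each command in turn and stops at the first failure.
fn run(commands: &[Vec<String>]) -> std::io::Result<()> {
    for cmd in commands {
        let status = Command::new(&cmd[0]).args(&cmd[1..]).status()?;
        if !status.success() {
            let msg = format!("`{}` failed: {}", cmd.join(" "), status);
            return Err(std::io::Error::new(std::io::ErrorKind::Other, msg));
        }
    }
    Ok(())
}

fn main() -> std::io::Result<()> {
    // Machine-specific values from this post's setup; adjust for yours.
    let libdir = "/usr/local/lib/rustlib/x86_64-apple-darwin/lib";
    let cmds = pipeline("target/release/pgo.bc", libdir, "std-ca1c970e", &["10000000"]);
    if std::env::args().any(|a| a == "--run") {
        run(&cmds)
    } else {
        // Dry run by default: only print the commands.
        for cmd in &cmds {
            println!("{}", cmd.join(" "));
        }
        Ok(())
    }
}
```

Printing by default keeps the sketch safe to try: the profiling run is the step where real production data should replace the placeholder workload.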

We now have an executable compiled using profiling information. Is it fast?

The benchmarks

The program I tested is an n-body simulation. It was a great test target, since libstd is its only dependency and the workload depends on a number given as a command-line argument. Here is a test with time (I know it's not the most precise benchmarking tool, but for a precision of a tenth of a second, it works all right):

```
$ time ./target/release/pgo 1000000000
-0.169075164
-0.169051540

real    1m22.528s
user    1m22.214s
sys     0m0.173s

$ time ./pgo-opt 1000000000
-0.169075164
-0.169051540

real    1m9.810s
user    1m9.687s
sys     0m0.070s
```

As it turns out, we save about 15% of the running time on this program (from 1m22.5s down to 1m9.8s). Other programs may benefit less, but this is encouraging! So, what happened inside our program?
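The percentage can be recomputed from the `real` (wall-clock) lines of the two runs. A small Rust sketch of that arithmetic follows; `parse_time` and `time_saved_percent` are hypothetical helpers, and the parser only handles the `XmY.YYYs` shape printed by `time` here.

```rust
/// Parses a duration printed by `time`, such as "1m22.528s", into seconds.
/// Only the minutes-and-seconds shape is handled.
fn parse_time(s: &str) -> Option<f64> {
    let rest = s.strip_suffix('s')?;
    let (minutes, seconds) = rest.split_once('m')?;
    Some(minutes.parse::<f64>().ok()? * 60.0 + seconds.parse::<f64>().ok()?)
}

/// Share of the original running time that was saved, in percent.
fn time_saved_percent(before: f64, after: f64) -> f64 {
    (before - after) / before * 100.0
}

fn main() {
    // `real` times of the normal and PGO-optimized runs.
    let before = parse_time("1m22.528s").expect("valid duration");
    let after = parse_time("1m9.810s").expect("valid duration");
    println!(
        "saved {:.1}% of the running time (speedup {:.2}x)",
        time_saved_percent(before, after),
        before / after
    );
}
```

It prints `saved 15.4% of the running time (speedup 1.18x)`: the two ways of expressing the same gain give different-looking numbers, so it is worth saying which one you report.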

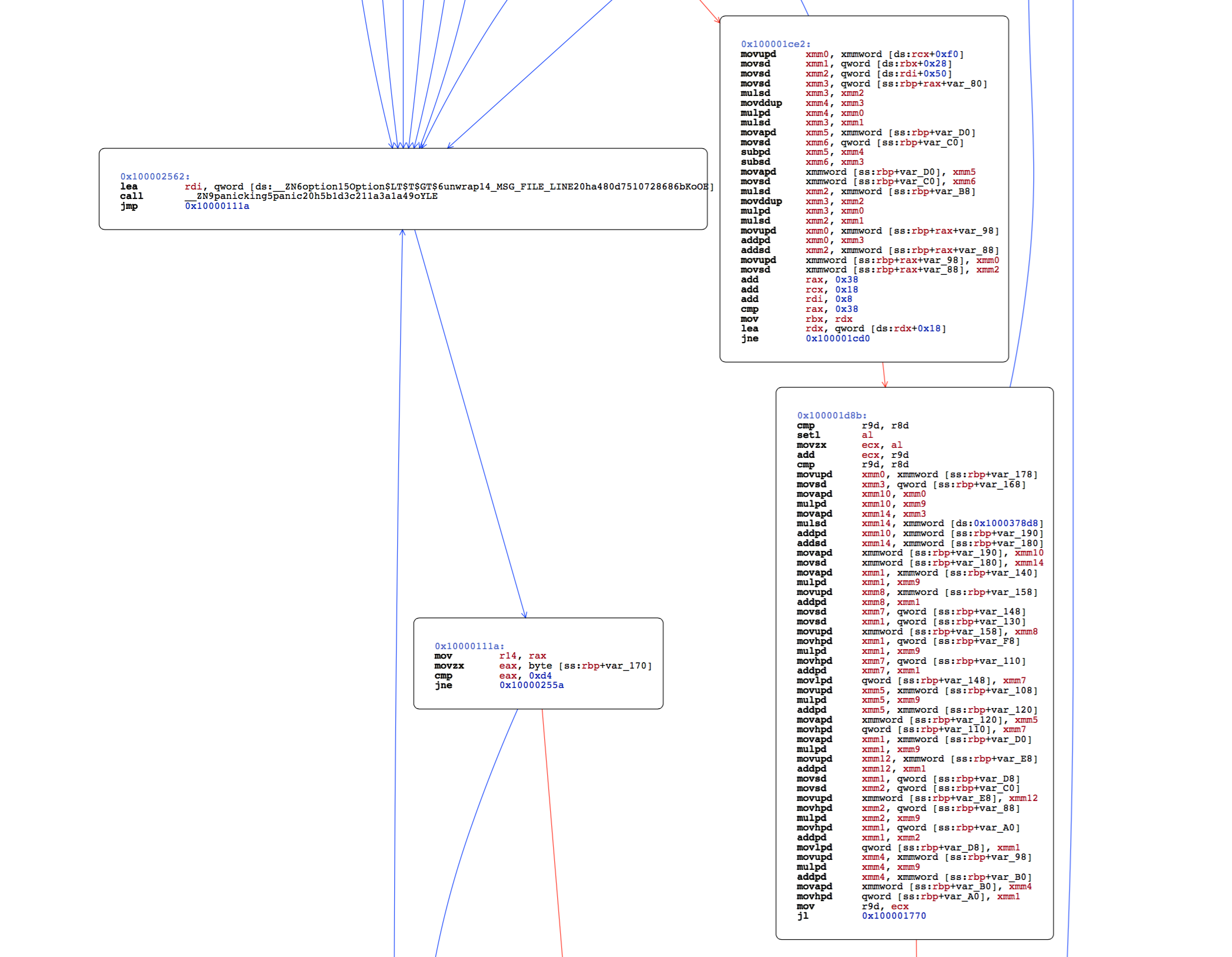

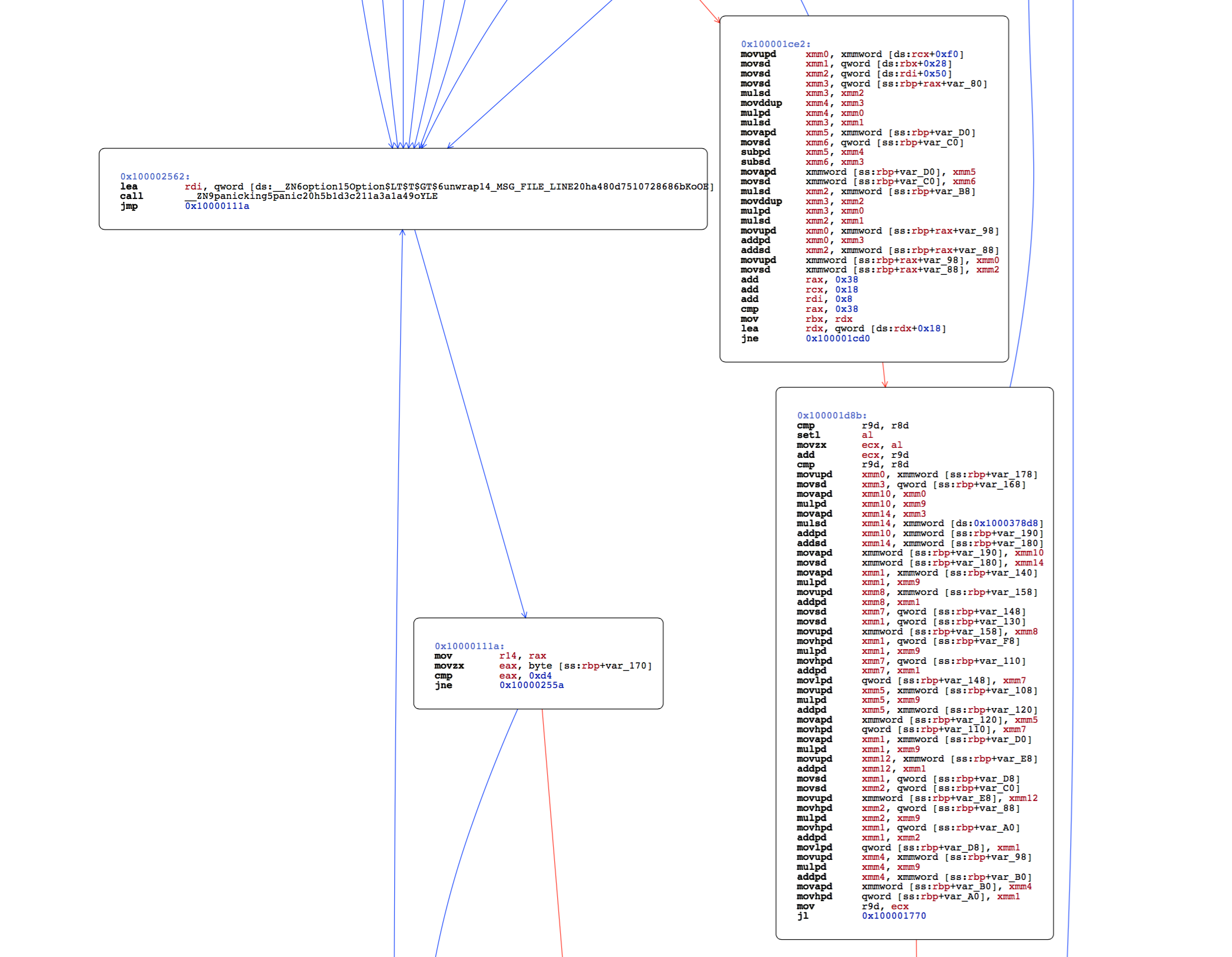

The generated code

I provide assembly dumps of the normal program, generated with cargo build --release, and of the one optimized with PGO. Mostly, the code has been reordered, probably to fit cache lines better. You can also consult PDF files with call graphs: normal, PGO optimized.

The whole code for this article is available here if you want to reproduce the results or tinker with optimizations yourself.

This is a proof of concept, demonstrating that profile-guided optimization could work in Rust. It probably deserves to be integrated into rustc, but a lot of work remains before it is usable. Still, there's a GitHub issue where you can weigh in if you would like this optimization for your applications.

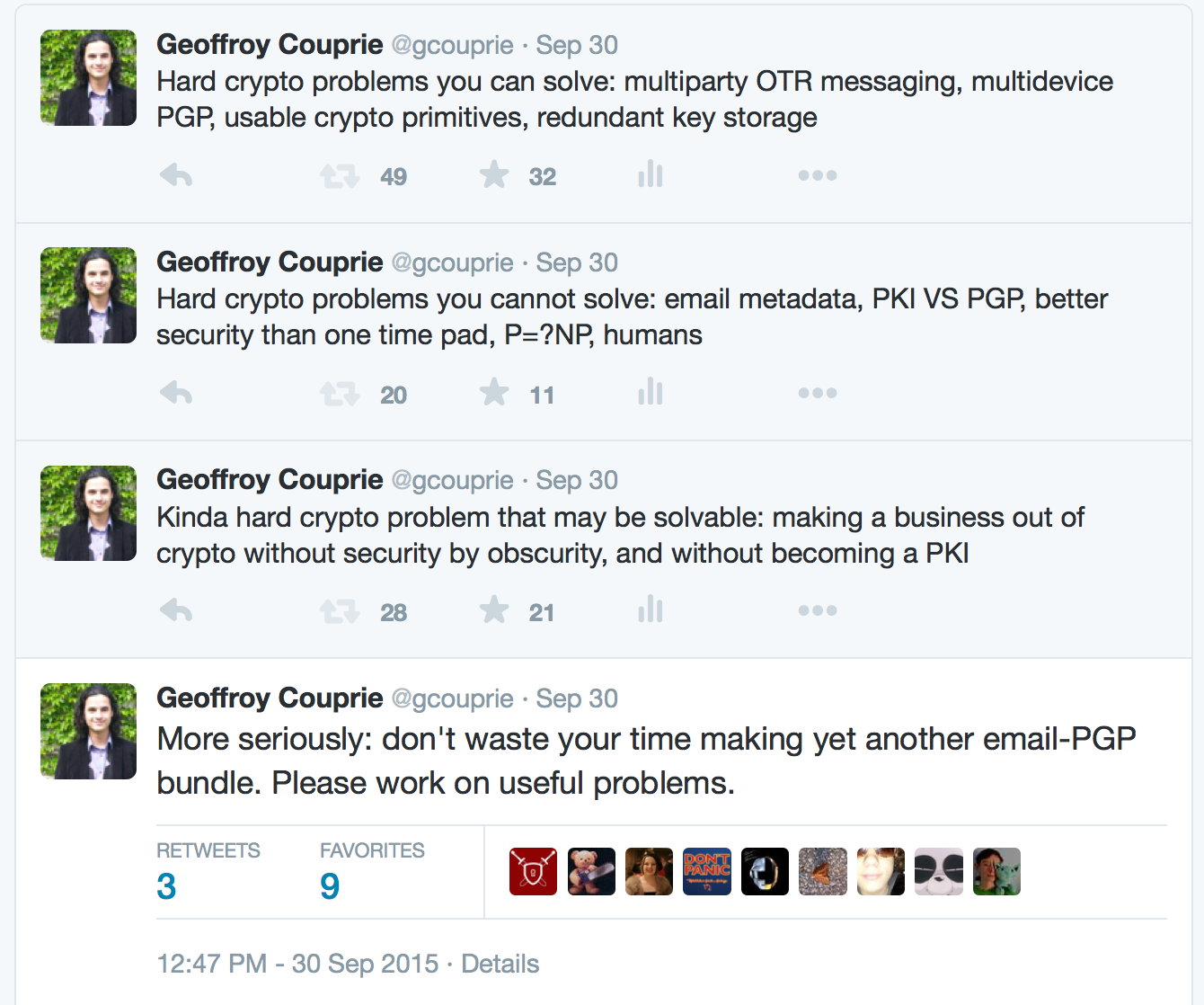

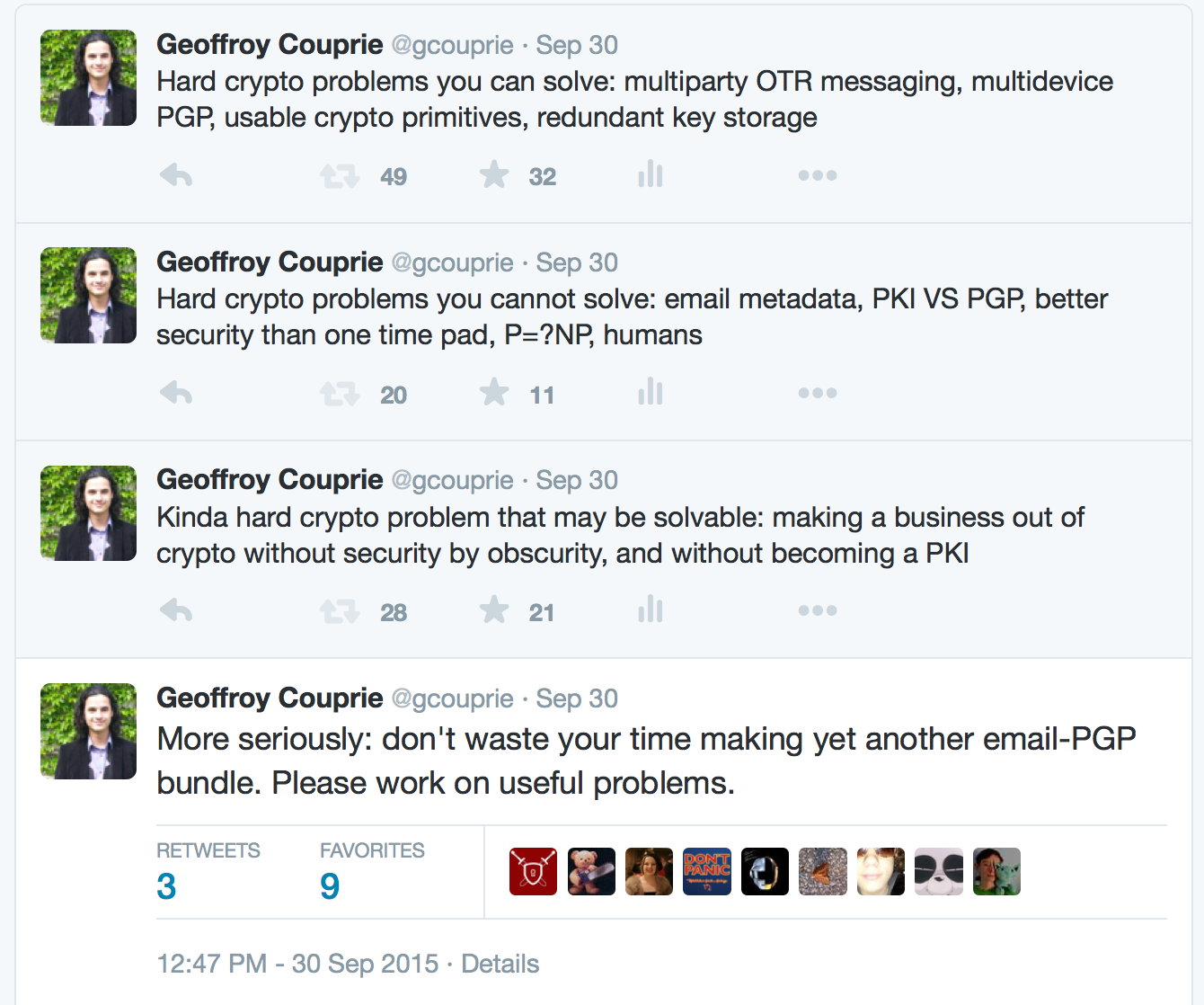

To follow up on the small Twitter rant that got people to explain GPG and OTR to me for a whole day, I'll explain the ideas behind this.

There is always a new project around self-hosting email and PGP, or building a new encrypted chat app. Let's say it out loud right now: those projects are not trying to improve the situation, they just want to build their own. Otherwise, they would help one of the 150 already existing projects. Worse, many of them try to build a business out of it, and end up making a system where you need to trust their business completely (as a side note, building a business is fine, but be honest about your goals).


Some ideas attract new projects because the concept is easy to grasp, and this drives developers right into "I'm smarter than everybody and can do better than existing projects" territory. But there's a reason they fail.

Making an encrypted chat app means solving the same problem as making any chat app (moving people off their existing platform and onto a new one), on top of designing a safe protocol. Building a whole new infrastructure is not an easy task.

Making email and PGP easier to use is not only a UX issue. There is a complete mental model of how it works, what the failure modes are, and what the trust levels are. On top of that come email's metadata leaks, the lack of forward secrecy, and key management. Even with the simplest interface, the user has to build that mental model, and understand that other users may have different models. This is inherent to the way PGP works. It has always been, and will always be, an expert's tool, and as such it is unfit for mass deployment. You cannot solve infrastructure problems by teaching people.

You cannot solve infrastructure problems by teaching people

There is a pattern here: people want to build tools that will be the basis of safe communications for years to come. Those are infrastructure problems, like electricity, running water or Internet access. They cannot be solved by teaching people how to use a tool: the tool has to work. Nor can they be solved by decentralization: at some point, someone has to pay for the infrastructure's maintenance. As much as I like the idea of decentralizing everything, this is not how people solve serious engineering problems. But what sets infrastructure businesses apart from other types of business is that they are dumb pipes. They don't care what's running through them, and you can easily replace one with another.

There is a strong need for "private by default", authenticated, anonymous communication. It will only come once the basic building blocks are in place:

- multiparty Off-The-Record (or other protocols like Axolotl): forward-secret communication currently only works between two parties. Adding more members and making the protocols safe against partitions is a real challenge

- multidevice PGP (or alternate message encryption and authentication system): currently, to use PGP on multiple devices, either you synchronize all your private keys on all your devices, or you accept that some devices will not be able to decrypt messages. This will probably not work unless you have a live server somewhere, or at least some hardware device containing keys

- redundant key storage: systems where a single key holds everything are very tempting, but they're a nightmare in everyday operation. You will lose the key. And the backup. And the other backup. You will end up copying your master key everywhere, encrypted with a rarely used password that is either too simple and easily cracked, or too complex and forgotten. And how can a friend or family member access the key in case of emergency?

- private information retrieval: PIR systems are databases you can query for data without the database learning (within some bounds) which piece of data you wanted. You do not need to go wild with homomorphic encryption to build something useful

- encrypted search: the tradeoffs in search on encrypted data are well known, but there are not enough implementations

- usable cryptography primitives: there is some work in that space, but people still rely on OpenSSL as their go-to crypto library. Crypto primitives should not even let you make mistakes

Those are worthwhile targets if you know a fair bit of cryptography and security, and know how to build a complete library or product.
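To give a feel for how little machinery a useful PIR scheme can need, here is a sketch of the classic two-server XOR construction in Rust. It assumes two non-colluding servers, each holding an identical copy of a database of fixed-size records (here, `u64` values). The client sends each server a subset of indices; the two subsets differ only at the wanted index, so each server alone sees a random-looking subset, while XORing the two answers cancels every record except the wanted one. All names are hypothetical, and the toy random generator is not cryptographically secure.

```rust
/// Toy xorshift64 generator. NOT cryptographically secure: a real
/// deployment would need a proper CSPRNG.
struct XorShift(u64);

impl XorShift {
    fn next_bool(&mut self) -> bool {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x & 1 == 1
    }
}

/// Server side: XOR of the records selected by `query`. The server sees
/// the subset, but a uniformly random subset reveals nothing about the
/// index the client wants.
fn answer(db: &[u64], query: &[bool]) -> u64 {
    db.iter()
        .zip(query)
        .filter(|&(_, &selected)| selected)
        .fold(0, |acc, (&record, _)| acc ^ record)
}

/// Client side: two queries that differ only at `index`.
fn make_queries(n: usize, index: usize, rng: &mut XorShift) -> (Vec<bool>, Vec<bool>) {
    let q1: Vec<bool> = (0..n).map(|_| rng.next_bool()).collect();
    let mut q2 = q1.clone();
    q2[index] = !q2[index];
    (q1, q2)
}

/// Asks server A and server B, then combines their answers.
fn retrieve(server_a: &[u64], server_b: &[u64], index: usize, rng: &mut XorShift) -> u64 {
    let (q1, q2) = make_queries(server_a.len(), index, rng);
    // Records selected by both queries cancel out, leaving only db[index].
    answer(server_a, &q1) ^ answer(server_b, &q2)
}

fn main() {
    let db = vec![11, 22, 33, 44, 55, 66, 77, 88];
    let mut rng = XorShift(0x9E37_79B9_7F4A_7C15);
    for i in 0..db.len() {
        assert_eq!(retrieve(&db, &db, i, &mut rng), db[i]);
    }
    println!("retrieved record 5: {}", retrieve(&db, &db, 5, &mut rng));
}
```

Privacy here rests entirely on the two servers not colluding, and each answer costs a scan of the whole database; more elaborate schemes make different trade-offs.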

But for products, we need better models for infrastructure needs. A system like Tahoe-LAFS is a good model: the user is in control of the data, the provider is oblivious to the content, the user can be the client of multiple providers, and switching from one to another is easy. This does not make for shiny businesses. Infrastructure companies compete mainly on cost, scale, availability, speed and support, since they all provide roughly the same features.

Of course, infrastructure requires standards and interoperability, and this usually does not make good material for startup hype. But everyone wins from this in the end.

29 Aug 2015 • General

I'm getting less and less satisfied with Twitter as a way to exchange thoughts. The 140-character limit is not the real problem, since you can easily chain messages. The issue is that those thoughts are ephemeral. This medium does not optimize for smart discussion with relevant people, but for quick wit from whoever is currently available, before everything gets buried under a stack of comments on the latest news. Retweeting does not help much, since the main reasons to retweet are 1. it's funny, 2. it's shocking, 3. it's inspiring, and, last of all, "maybe it's interesting". Retweets don't create much discussion.

Until now, I have primarily used this blog for long posts (thus explaining why I don't write much here). As my friends say "if it's more than 3 tweets, write a blog post".

So in the coming months, I'll try to post short, spontaneous, not particularly well-researched articles, instead of ranting in 140 characters.

When networks began to expand and people saw the need for secure communication, they designed complex systems based on public key cryptography, which more or less worked. Problem: how do you trust that the key a server sent you is the right one? How can you make sure that it is not somebody else trying to impersonate that website?

Multiple solutions were proposed, and the most promising was a public directory of domain names and associated public keys, maintained by a peer-to-peer network named KeyCoin. It looked better than so-called Web of Trust solutions, because everybody could agree on the correct key for a given domain. As long as nobody held 51% of the network, no change could happen without being validated by a lot of different peers. The network was maintained by 10,000 enthusiastic system administrators who took their task very seriously (after all, the security of the whole system depended on their honesty), and nobody had enough computing power to take over the network.

After a while, people began using the system, since it was directly integrated in their browsers, but they did not want to run a node on the network themselves. It was too bothersome, and they could trust the administrators. Also, they had to ask one of them every time they wanted a change. The whole process was a bit artisanal.

In the meantime, some people demonstrated the 51% attack on smaller networks, and that worried people. They wanted a safe system, one that did not rely only on those sysadmins who could do anything. Who were they anyway? Still, running that system was too complex for non-technical people to do themselves, so they did not worry enough. But some governments found the idea of rewriting which key matched which name appealing. Maybe to catch pedophiles, terrorists, criminals. Or maybe to censor websites, I do not know, they told me it was for my own good.

Some smart person found a good solution: if controlling the system required owning 51% of it, the easiest way was to have a lot of machines, enough to outweigh the sysadmins. That did not seem risky when people designed the system. Nobody could have enough computing power to take over the whole network, and there would be even more nodes every day.

Yet that person got enough funding to install tens of thousands of machines and make them join the network. They even provided a nice enough interface for people and businesses to submit their domain name and public key, as long as they paid some fees. The sysadmins welcomed him at first, since money coming into the system validated their ideas. After a while, they started worrying, since none of them could keep up with the computing power, but that company assured them it would never reach 51% of the network.

Other companies jumped on the bandwagon and started to profit from that new business opportunity. Governments started their own server farms to participate too. Problem: now that everybody (except the sysadmins) had a lot of computing power, nobody had enough to control the network entirely.

So they started making alliances. If a few major players worked as a team, they could do whatever they wanted on the network. If one of them decided to try and replace a key on the ledger, the others could help it. Of course, once they began doing that, others wanted to participate. So they created a few rules to join their club. First, you needed to have enough machines. That was a good rule, because it made a big barrier to entry: you could not start as a small player. The other rules? You had to submit to an audit, performed by the other players. Yet another barrier to entry. And once they deemed you acceptable, you had to follow the requests of governments, which arbitrarily refused some candidates.

Even with those big barriers to entry, a few hundred players came up, often backed by governments. Of course, they all ended up on the same team, doing whatever they wanted as long as nobody complained, because whenever one of them had something shady to do, all the others automatically followed.

Since building those big companies required money, they made their clients pay more and more, and, to make that easier to accept, provided "premium" options showing they trusted you more, since they had taken the time to phone your company and ask a few questions.

Some found that big system too centralized, too obedient to states, and decided to fork it. There are separate public ledgers, but they are not embedded in browsers: you need to integrate them yourself, and that's bothersome. Also, most of those networks have a few hundred nodes at best.

From a nice, decentralized, home made system, we ended up with a centralized system controlled by corporations and governments.

Now let me tell you about a system I designed. It is based on a concept named certificate: a cryptographically signed file that links a public key to a domain name. Now here's the catch: a certificate represents a key, and is signed by another key, which is represented by another certificate, and so on and so forth until you reach a certificate that signs itself. That system is good, because you just have to embed the root certificate your friend gives you, and you'll be able to verify the keys of his websites, even if those keys change. And all this without even asking the public ledger, so it is a truly decentralized and more anonymous system! Nothing could go wrong with that, right?
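The chain walk described in that paragraph can be sketched in a few lines of Rust. This is a toy under loud assumptions: keys are plain integers and the "signature" is a hash of the certified data and the signer's key, which is NOT secure cryptography (anyone who knows the issuer's key can forge it). Only the shape of the chain is meant to be accurate. `Cert`, `toy_sign`, `issue` and `verify` are hypothetical names.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Toy certificate linking a name to a key, "signed" by its issuer.
#[derive(Clone)]
struct Cert {
    subject: String,
    key: u64,
    issuer: String,
    signature: u64,
}

/// Toy signature over the certified data with the signer's key (NOT secure).
fn toy_sign(subject: &str, key: u64, signer_key: u64) -> u64 {
    let mut h = DefaultHasher::new();
    (subject, key, signer_key).hash(&mut h);
    h.finish()
}

/// Issues a certificate for `subject`, signed by the holder of `issuer_key`.
fn issue(subject: &str, key: u64, issuer: &str, issuer_key: u64) -> Cert {
    Cert {
        subject: subject.to_string(),
        key,
        issuer: issuer.to_string(),
        signature: toy_sign(subject, key, issuer_key),
    }
}

/// Walks from `leaf` towards a self-signed root, checking each signature
/// with the issuer's key, and accepts only if that root is trusted.
fn verify(leaf: &Cert, intermediates: &[Cert], trusted_roots: &[Cert]) -> bool {
    let mut current = leaf.clone();
    // Bounded walk, so a cycle between certificates cannot loop forever.
    for _ in 0..=intermediates.len() + 1 {
        if current.issuer == current.subject {
            let self_signed = current.signature == toy_sign(&current.subject, current.key, current.key);
            let trusted = trusted_roots
                .iter()
                .any(|r| r.subject == current.subject && r.key == current.key);
            return self_signed && trusted;
        }
        let parent = match intermediates
            .iter()
            .chain(trusted_roots)
            .find(|c| c.subject == current.issuer)
        {
            Some(p) => p.clone(),
            None => return false, // unknown issuer
        };
        if current.signature != toy_sign(&current.subject, current.key, parent.key) {
            return false; // signature does not match the issuer's key
        }
        current = parent;
    }
    false
}

fn main() {
    let root = issue("Friend Root", 1, "Friend Root", 1);
    let intermediate = issue("Friend Intermediate", 2, "Friend Root", 1);
    let site = issue("blog.example", 3, "Friend Intermediate", 2);
    let chain = [intermediate];
    let roots = [root];
    println!("site accepted: {}", verify(&site, &chain, &roots));
    // Same name, issued by someone outside the chain: rejected.
    let rogue = issue("blog.example", 4, "Someone Else", 9);
    println!("rogue accepted: {}", verify(&rogue, &chain, &roots));
}
```

Note what `verify` does not check: whether a given root is entitled to vouch for a given name. Any trusted root can certify any domain, so whoever controls one root can speak for every name.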

I do not know who started this argument a few days ago. It feels like something coming from HN. Do you need to know mathematics to be a good programmer?

There are a lot of differing opinions. Maybe programming is a subbranch of mathematics, or programming is using mathematics. Or learning programming is closer to learning a new language. To me, saying that programming is about languages is like saying that literature is about languages. Sure, you need words to express concepts, some languages are better suited than others for that, and some concepts are better expressed in other languages. It is more like a hierarchy to me: philosophy formalizes concepts used by authors to write in common languages. Mathematics formalizes concepts used by programmers to create code in common languages.

But this is beside the point.

This debate sparks outrage, since it touches a central point of our education, and one that is often not taught very well. "Look, I do not use geometry while writing a loop, so maths are pointless for me". A lot of developers will never learn basic algebra or logic and will never need it in their day job. And that's okay.

Programming is not a single profession anymore. Each and every one of us has a different definition. A mechanical engineer working on bridges, another on metallic parts for cars and another one on plastic toys all have different needs, different techniques for their job, although the fundamental basis (evaluating breaking strength, time of assembly, production costs) is the same. That does not make one of these jobs worth more than the other.

The real problem is that we are still fighting among ourselves to define what our job is. The other pointless debate, about software being engineering, science or craft, is evidence of that. And it will stay hard to define for a long time.

We are in a unique position. Usually, when a new field emerges, either tinkerers launch it and good practices are later studied to turn it into engineering, or scientists create it, then the means of production become cheaper and crafters take over.

Computers were started by scientists, but ease of access gave crafters a good opportunity to take over. That does not mean research stopped when people started coding at home. So now, in a relatively new field (less than a century old), while we are still exploring, we have a very large spectrum of jobs and approaches, from the most scientific to the most artistic. And that is okay. More world views will help us get better at our respective jobs.

So, while you are arguing that the other side is misguided, unrealistic or not rigorous, take time to consider where they come from. They do not have the same job, and their job may seem pointless to you, but they can be good at it, so there is probably something you can learn from their approach. The only thing you should not forgive in the other side is a lack of curiosity.
